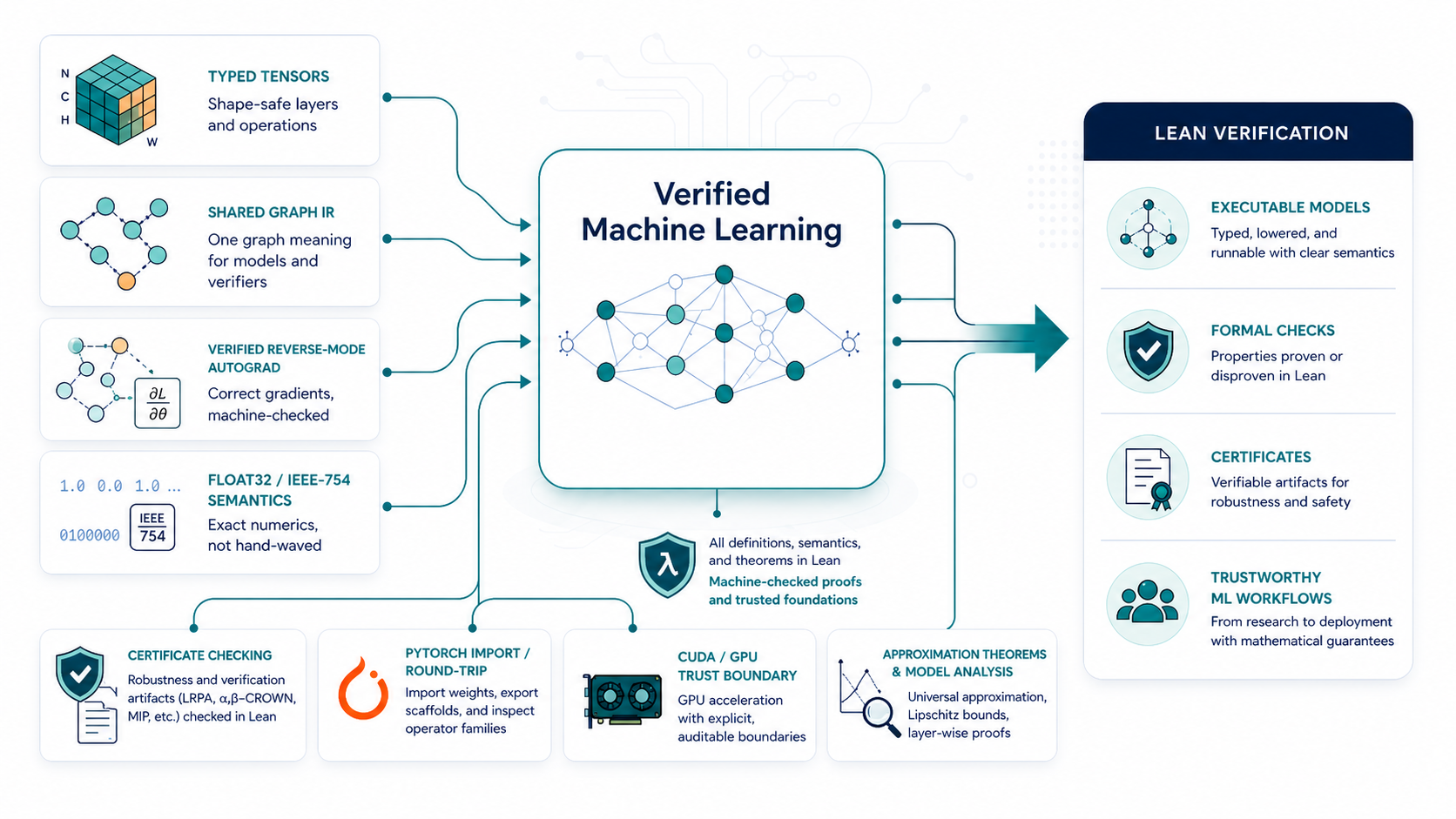

TorchLean formalizes neural network infrastructure in Lean 4, connecting typed tensor and layer specifications, runnable training examples, graph IR semantics, floating-point contracts, CUDA trust boundaries, and artifacts that Lean checkers can inspect.

The goal is a practical bridge between modern ML workflows and formal reasoning: models can be executed, lowered, inspected, imported from PyTorch-style pipelines, and checked against explicit mathematical contracts.

What TorchLean Gives You

Where It Fits

TorchLean sits between the software people already use and the proof artifacts they want to trust. The project is written in Lean 4 and uses a PyTorch-style surface where that makes model code easier to read. For the Python ecosystem, see the official PyTorch documentation; for Lean itself, start with the Lean documentation.

Paper / Citation

@misc{george2026torchleanformalizingneuralnetworks,

title={TorchLean: Formalizing Neural Networks in Lean},

author={Robert Joseph George and Jennifer Cruden and Xiangru Zhong and Huan Zhang and Anima Anandkumar},

year={2026},

eprint={2602.22631},

archivePrefix={arXiv},

primaryClass={cs.MS},

url={https://arxiv.org/abs/2602.22631},

}